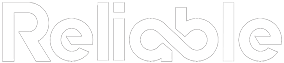

The dashboard is green. Every bar chart points up. The monthly report lands on the plant manager’s desk with a satisfying row of targets met. And yet, somehow, the maintenance crew just pulled its third consecutive weekend of emergency repairs. If that disconnect sounds familiar, you’re likely dealing with maintenance KPIs that hide real problems behind a wall of favorable-looking numbers.

This happens more often than anyone in leadership wants to admit. The metrics say one thing. The plant floor says something else entirely.

Why Maintenance KPIs That Hide Real Problems Are So Common

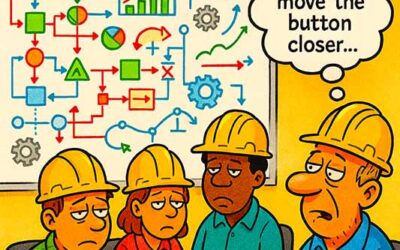

Most maintenance KPI frameworks weren’t designed by the people who turn wrenches. They were designed by consultants, software vendors, or corporate teams who needed something to put in a quarterly review. The result is a set of metrics that are easy to calculate, easy to present, and dangerously easy to game.

Take PM completion rate, arguably the most widely reported maintenance KPI. A plant reports 95% PM completion. Impressive, right? But dig into what “completed” means and the picture changes fast:

- PMs marked complete after a visual walk-by with no actual inspection performed

- PMs with half the checklist items skipped because the equipment was running and operations wouldn’t release it

- PMs rescheduled three times before finally getting done (late, but technically completed)

- PMs where the technician spent ten minutes on a task that should have taken ninety

A 95% completion rate built on that foundation tells leadership exactly nothing about equipment health. It tells them the CMMS was updated. Those are very different things.

A metric that rewards checking a box will always produce checked boxes. Whether the work behind that check is real depends entirely on what you choose to verify.

The same pattern plays out across nearly every standard maintenance KPI. Mean time between failures (MTBF) looks great until you realize nobody is recording short stoppages. Schedule compliance hits 90% because the schedule was soft-loaded with easy jobs. Backlog age drops because someone bulk-closed old work orders during a system cleanup, not because the work got done.

Each of these metrics, taken at face value, paints a rosy picture. Together, they create an environment where leadership genuinely believes the maintenance program is performing well, right up until a catastrophic failure forces a plant shutdown.

The Five Most Misleading Maintenance KPIs

Some metrics deserve more scrutiny than others. These five are the most common maintenance KPIs that hide real problems, and they show up in almost every plant’s monthly report.

1. PM Completion Rate Without Quality Audits

As described above, this metric measures activity, not effectiveness. Without periodic PM quality audits (where a supervisor or reliability engineer physically verifies the work was done to standard), completion rate is an honor system.

The fix: audit 10% of completed PMs each month. Check that all steps were performed, all readings recorded, and all findings documented. Track the audit pass rate alongside the completion rate. If completion is 95% but audit pass rate is 60%, you know exactly where the gap is.

Completion rate answers whether the work order was closed. Audit pass rate answers whether the work was actually done. Most plants only track one of these.
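The two rates are easy to compute side by side. What follows is a minimal sketch, assuming a hypothetical list of PM records with `completed`, `audited`, and `audit_passed` flags. The field names and sample counts are illustrative and do not come from any particular CMMS.

```python
from dataclasses import dataclass


@dataclass
class PMRecord:
    # Hypothetical fields; a real CMMS export will use different names.
    completed: bool
    audited: bool = False
    audit_passed: bool = False


def completion_rate(pms):
    """Share of scheduled PMs that were closed as complete."""
    return sum(p.completed for p in pms) / len(pms)


def audit_pass_rate(pms):
    """Share of audited, completed PMs that passed; None if none were audited."""
    audited = [p for p in pms if p.completed and p.audited]
    if not audited:
        return None
    return sum(p.audit_passed for p in audited) / len(audited)


# Illustrative month: 100 scheduled PMs, 95 closed, 10 of those audited, 6 passed.
pms = (
    [PMRecord(True, True, True)] * 6
    + [PMRecord(True, True, False)] * 4
    + [PMRecord(True)] * 85
    + [PMRecord(False)] * 5
)

print(f"completion: {completion_rate(pms):.0%}")
print(f"audit pass: {audit_pass_rate(pms):.0%}")
```

Reporting both numbers in the same row of the monthly report makes the gap between them hard to overlook.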

2. Planned vs. Reactive Maintenance Ratio

The commonly cited target is 80% planned, 20% reactive. Many plants report numbers in that range. Fewer can defend how they categorize their work.

Common games that inflate the planned ratio:

- Reclassifying emergency jobs as “urgent planned” after the fact

- Writing a work order mid-repair and calling it planned because paperwork now exists

- Counting only true breakdowns (motor seized, pipe burst) as reactive, while treating everything else as planned regardless of how much advance notice the crew actually had

A meaningful planned percentage requires clear, enforced definitions. Planned means the job was identified, scoped, parts were staged, and it was loaded onto a weekly schedule before execution. Anything else is some shade of reactive, and the metric should reflect that honestly.
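One way to enforce that definition is to derive the planned flag from recorded facts instead of letting someone pick it from a dropdown. The sketch below assumes hypothetical work order fields (`scoped`, `parts_staged`, `scheduled_on`, `started_on`) and treats a job scheduled on the same day it started as reactive. That cutoff is an assumption; each plant would need to set its own.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class WorkOrder:
    # Hypothetical fields for illustration only.
    scoped: bool
    parts_staged: bool
    scheduled_on: Optional[date]  # date the job was loaded onto the weekly schedule
    started_on: date


def is_planned(wo: WorkOrder) -> bool:
    """Planned only if scoped, staged, and scheduled before execution began."""
    return (
        wo.scoped
        and wo.parts_staged
        and wo.scheduled_on is not None
        and wo.scheduled_on < wo.started_on
    )


work_orders = [
    WorkOrder(True, True, date(2024, 3, 1), date(2024, 3, 6)),   # scheduled ahead
    WorkOrder(True, True, date(2024, 3, 6), date(2024, 3, 6)),   # paperwork written mid-repair
    WorkOrder(False, False, None, date(2024, 3, 7)),             # breakdown, never scheduled
    WorkOrder(True, False, date(2024, 3, 1), date(2024, 3, 8)),  # scheduled, parts not staged
]

planned_share = sum(is_planned(wo) for wo in work_orders) / len(work_orders)
print(f"planned: {planned_share:.0%}")
```

Because the rule depends on dates and staging status, reclassifying a job after the fact requires changing the underlying record, which is easier to spot in an audit than a changed label.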

3. Mean Time Between Failures

MTBF is a powerful metric when it’s accurate. The problem: most CMMS implementations only capture failures that generate a work order. Short stoppages, operator-performed resets, and quick fixes by maintenance techs who don’t bother writing it up all disappear from the data.

The result is an MTBF that looks longer (better) than reality. A pump that trips weekly but gets reset in five minutes each time might show zero failures in the CMMS. Meanwhile, each trip can damage the motor windings incrementally, and the eventual burnout looks like a “sudden” failure that nobody saw coming.

The failures you track are only a fraction of the failures that happen. MTBF based on incomplete data produces confidence, not accuracy.
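A quick calculation shows how far the reported figure can drift. This sketch uses the usual definition of MTBF as operating time divided by failure count. The run hours and event counts are assumed numbers chosen to mirror the pump example, not measured data.

```python
def mtbf_hours(operating_hours, failures):
    """MTBF = operating time / failure count; undefined (None) with zero failures."""
    if failures == 0:
        return None
    return operating_hours / failures


operating_hours = 8_000   # assumed annual run time for the pump
cmms_failures = 1         # only the eventual burnout generated a work order
unrecorded_trips = 52     # weekly trips reset by operators, never written up

reported = mtbf_hours(operating_hours, cmms_failures)
actual = mtbf_hours(operating_hours, cmms_failures + unrecorded_trips)

print(f"reported MTBF: {reported:.0f} h")
print(f"MTBF including unrecorded trips: {actual:.0f} h")
```

Under these assumptions the CMMS reports an MTBF of 8,000 hours, while counting every trip gives roughly 151 hours.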

4. Maintenance Cost as a Percentage of RAV

Replacement asset value (RAV) benchmarks are everywhere. “Best in class” maintenance spending is typically cited as 2-3% of RAV. But RAV itself is a moving target. Some plants use original purchase price (which understates current value). Others use insured value (which may overstate it). And the denominator shifts every time a capital project adds or retires an asset.

Even if RAV is accurate, spending 2.5% of it on maintenance means nothing without context. A plant spending 4% of RAV on a well-executed preventive and predictive program is in far better shape than one spending 2% because it deferred every non-critical repair for two years. The lower number looks better on a slide. The higher number keeps the plant running.
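The sensitivity to the denominator is easy to demonstrate. In this sketch the spend and both RAV figures are invented for illustration. The only point is that the same maintenance budget lands on either side of the benchmark depending on which valuation is used.

```python
def maintenance_pct_of_rav(annual_spend, rav):
    """Annual maintenance spend as a percentage of replacement asset value."""
    return 100 * annual_spend / rav


annual_spend = 3_000_000  # assumed annual maintenance spend

# Two hypothetical valuations of the same plant.
rav_original_cost = 75_000_000    # original purchase price, understates current value
rav_insured_value = 150_000_000   # insured value, may overstate it

print(maintenance_pct_of_rav(annual_spend, rav_original_cost))
print(maintenance_pct_of_rav(annual_spend, rav_insured_value))
```

With these figures the same plant reports 4% of RAV on one basis and 2% on the other, without a single change on the floor.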

5. Backlog in Weeks

Backlog measured in weeks of available labor sounds precise. A backlog of around four weeks is often cited as healthy. But the calculation assumes every work order in the backlog has accurate estimated hours, and that’s rarely true.

A backlog full of work orders with default one-hour estimates (because the planner didn’t update them) will dramatically understate the true workload. Conversely, a backlog with inflated estimates will look worse than it is. Either way, the number on the report is noise.

A backlog measured in labor weeks is only as accurate as the estimated hours on each work order. If those estimates are garbage, the metric built on them is garbage too.
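The effect of default estimates can be checked directly. This is a rough sketch assuming a one-hour CMMS default and a crew with 320 available labor hours per week. Both values, and the sample backlog, are hypothetical.

```python
DEFAULT_ESTIMATE_HOURS = 1.0  # assumed CMMS default for unplanned work orders


def backlog_weeks(estimated_hours, weekly_labor_hours):
    """Backlog in weeks = total estimated hours / available labor hours per week."""
    return sum(estimated_hours) / weekly_labor_hours


def default_estimate_share(estimated_hours):
    """Share of work orders still carrying the untouched default estimate."""
    n_default = sum(1 for h in estimated_hours if h == DEFAULT_ESTIMATE_HOURS)
    return n_default / len(estimated_hours)


# 200 open work orders: 80 properly planned at 12 h each, 120 left at the default.
estimates = [12.0] * 80 + [DEFAULT_ESTIMATE_HOURS] * 120

# Same backlog if the 120 defaulted jobs really take 6 h each (an assumption).
corrected = [12.0] * 80 + [6.0] * 120

print(backlog_weeks(estimates, 320))
print(backlog_weeks(corrected, 320))
print(default_estimate_share(estimates))
```

In this example the reported backlog is about 3.4 weeks and the corrected one is 5.25 weeks. Publishing the share of defaulted estimates next to the backlog figure tells the reader how much to trust it.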

How to Audit Your KPIs for Hidden Problems

The goal is to create a verification layer that catches the gap between what the metric says and what’s actually happening on the floor. This requires some structured effort, but the payoff is a leadership team that makes decisions based on reality.

Start with these steps:

- Pick your top five reported KPIs and write down, in plain language, what each one actually measures (inputs, calculation, data source)

- For each KPI, list the three most likely ways the data could be inaccurate or gamed

- Design one verification check per KPI: a PM quality audit, a random sample of “planned” jobs checked against the weekly schedule, a comparison of CMMS failure records against operator logs

- Run these checks monthly and report the verification results alongside the KPIs themselves

The verification layer doesn’t need to be elaborate. A reliability engineer spending four hours a month sampling and cross-referencing data will catch the biggest distortions. The point is building a habit of asking “is this number real?” before acting on it.
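As an illustration of one such check, the comparison of CMMS failure records against operator logs can be a simple set difference. The sketch assumes both sources can be reduced to `(asset, date)` pairs. The asset tags and dates are made up, and real logs would likely need fuzzier matching on time.

```python
def unrecorded_stoppages(operator_log, cmms_failures):
    """Return operator-logged stoppages with no matching CMMS failure record."""
    recorded = set(cmms_failures)
    return [event for event in operator_log if event not in recorded]


# Hypothetical (asset, date) pairs from each source.
operator_log = [
    ("P-101", "2024-03-04"),
    ("P-101", "2024-03-11"),
    ("C-200", "2024-03-12"),
]
cmms_failures = [("C-200", "2024-03-12")]

missing = unrecorded_stoppages(operator_log, cmms_failures)
capture_rate = 1 - len(missing) / len(operator_log)

print(missing)
print(f"CMMS capture rate: {capture_rate:.0%}")
```

The capture rate is the verification result to report beside MTBF: it says what fraction of the stoppages operators saw ever reached the failure data.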

Building Metrics That Reflect Reality

Fixing maintenance KPIs that hide real problems means shifting from output metrics (did the work order close?) to outcome metrics (did the equipment get healthier?). A few examples of outcome-oriented metrics worth tracking:

- Repeat failure rate: what percentage of corrective work orders repeat an earlier corrective work order on the same asset and failure mode from the previous 90 days?

- PM find rate: what percentage of PMs result in a documented finding that generates a follow-up corrective work order?

- Emergency work share: what percentage of total maintenance labor hours goes to emergency work? (This is harder to game than the planned/reactive ratio because it uses time, not job count.)

These metrics are harder to fake. A low PM find rate means either technicians are performing superficial inspections or the PM task lists are aimed at the wrong failure modes. Either way, it surfaces a real problem that completion rate alone would miss.
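The three metrics above can be sketched in a few lines. The record layout, the function names, and the sample data are assumptions for illustration. In particular, the repeat check here compares each corrective work order with the most recent earlier one on the same asset and failure mode.

```python
from datetime import date, timedelta


def repeat_failure_rate(corrective_wos, window_days=90):
    """Share of corrective work orders that recur within the window.

    corrective_wos: list of (asset, failure_mode, date) tuples.
    """
    last_seen = {}
    repeats = 0
    for asset, mode, day in sorted(corrective_wos, key=lambda wo: wo[2]):
        previous = last_seen.get((asset, mode))
        if previous is not None and day - previous <= timedelta(days=window_days):
            repeats += 1
        last_seen[(asset, mode)] = day
    return repeats / len(corrective_wos)


def pm_find_rate(pm_count, pms_with_followup):
    """Share of PMs that produced a follow-up corrective work order."""
    return pms_with_followup / pm_count


def emergency_hours_share(emergency_hours, total_labor_hours):
    """Emergency labor hours as a share of all maintenance labor hours."""
    return emergency_hours / total_labor_hours


# Hypothetical corrective work orders.
corrective_wos = [
    ("P-101", "seal leak", date(2024, 1, 10)),
    ("P-101", "seal leak", date(2024, 2, 20)),  # 41 days later: a repeat
    ("P-101", "seal leak", date(2024, 8, 1)),   # 163 days later: not a repeat
    ("C-200", "belt slip", date(2024, 3, 5)),
]

print(repeat_failure_rate(corrective_wos))
print(pm_find_rate(pm_count=200, pms_with_followup=14))
print(emergency_hours_share(emergency_hours=180, total_labor_hours=1200))
```

None of these inputs depends on how a job was labeled when it closed, which is what makes the resulting numbers harder to move without changing what actually happens to the equipment.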

Equipment tells the truth eventually. The question is whether your metrics are set up to hear it before the breakdown, or only after. The gap between a green dashboard and a healthy plant is often wider than anyone wants to believe. Closing that gap starts with treating every KPI as a hypothesis, testing it against physical reality, and having the discipline to report what you find.