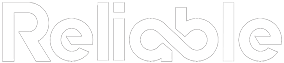

Many maintenance teams want to improve mean time between failures (MTBF). It is one of the key numbers leadership watches, the figure that shows up in quarterly reviews, and the benchmark that determines whether a reliability program gets more funding or more scrutiny. But chasing a better MTBF number and actually extending equipment life are two very different activities.

The gap between those two activities explains why some plants report impressive gains while their maintenance budgets keep climbing, their technicians keep working overtime, and their equipment keeps breaking in the same predictable patterns.

Why Most Efforts to Improve Mean Time Between Failures Fall Short

For repairable assets, MTBF is an average time between failure events. And like all averages, it can be distorted without technically lying. Restart the clock after every repair. Exclude certain failure categories. Reclassify breakdowns as “operational adjustments.” The number moves in the right direction while the equipment stays in the same condition.

Many facilities track MTBF without a standardized definition of what constitutes a failure. That’s a measurement problem before it’s a reliability problem.

A metric you can inflate by redefining failure will eventually teach your team to redefine failure instead of preventing it.

The most common distortions happen quietly. A technician patches a leaking seal and logs it as a preventive maintenance (PM) task instead of a corrective action. A production supervisor restarts a tripped motor and never submits a work order. Each omission shaves the real failure count, and the mean time between failures ticks upward without anyone making a deliberate decision to cheat.
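
To make the effect concrete, here is a minimal sketch of how unlogged events inflate the number. It assumes the common definition of MTBF for a repairable asset (operating hours divided by the number of failures). The `Event` record, the 2,000 operating hours, and the five events are illustrative assumptions, not data from any real plant.

```python
from dataclasses import dataclass


@dataclass
class Event:
    """One stoppage or repair event on a single asset (hypothetical record)."""
    description: str
    logged_as_failure: bool  # False if recorded as PM or never logged at all


def mtbf_hours(operating_hours: float, failure_count: int) -> float:
    """MTBF for a repairable asset: operating time divided by failure count."""
    if failure_count == 0:
        raise ValueError("MTBF is undefined with zero recorded failures")
    return operating_hours / failure_count


# Illustrative numbers only: one asset over one reporting period.
OPERATING_HOURS = 2000.0
events = [
    Event("bearing seized", True),
    Event("coupling sheared", True),
    Event("leaking seal patched, logged as PM", False),
    Event("motor tripped, restarted, no work order", False),
    Event("belt snapped", True),
]

# What the dashboard shows: only events that were logged as failures.
reported = mtbf_hours(OPERATING_HOURS, sum(e.logged_as_failure for e in events))
# What actually happened: every event counts.
actual = mtbf_hours(OPERATING_HOURS, len(events))

print(f"Reported MTBF: {reported:.0f} h")
print(f"Actual MTBF:   {actual:.0f} h")
```

In this example, two quiet omissions out of five events are enough to make the reported figure about two-thirds higher than the real one, with no change to the equipment.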

Defining Failure Honestly

The first step toward legitimate improvement is agreeing on what counts as a failure. This sounds obvious, but most organizations skip it. They inherit a computerized maintenance management system (CMMS) configuration from a decade ago, and nobody revisits the rules.

A functional failure is any condition where equipment can’t perform its intended function at the required standard. That includes:

- Complete stoppages (the obvious ones)

- Performance degradation below acceptable thresholds (running, but poorly)

- Safety or environmental limit exceedances, even if production continues

- Forced derates that require operator intervention to sustain output

Once your team agrees on this definition, every qualifying failure gets logged the same way. The MTBF number might drop initially. That’s the point. You’re seeing reality for the first time, and reality is where genuine improvements to mean time between failures begin.
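
One way to keep the definition consistent across shifts is to write it down as an explicit rule. The sketch below encodes the four criteria listed above. The `Observation` fields and the 95% output threshold are hypothetical placeholders; each site would substitute its own fields and its own acceptable limits.

```python
from dataclasses import dataclass


@dataclass
class Observation:
    """Hypothetical snapshot of an asset's condition when an event is logged."""
    stopped: bool
    output_pct_of_required: float  # 100.0 means meeting the required standard
    safety_or_env_limit_exceeded: bool
    forced_derate_with_operator_intervention: bool


# Assumed threshold; a real site would set this per asset or asset class.
MIN_ACCEPTABLE_OUTPUT_PCT = 95.0


def is_functional_failure(obs: Observation) -> bool:
    """Return True if any of the four agreed failure criteria is met."""
    return (
        obs.stopped
        or obs.output_pct_of_required < MIN_ACCEPTABLE_OUTPUT_PCT
        or obs.safety_or_env_limit_exceeded
        or obs.forced_derate_with_operator_intervention
    )


# Running, but poorly: still a failure under the agreed definition.
degraded = Observation(False, 80.0, False, False)
healthy = Observation(False, 100.0, False, False)
print(is_functional_failure(degraded), is_functional_failure(healthy))
```

The value of a rule like this is less in the code than in the agreement behind it: once the criteria are explicit, two technicians looking at the same event should log it the same way.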

Honest failure data tells you where your equipment actually stands. Inflated data tells you where your reporting gaps are.

Standardizing failure definitions also exposes chronic issues that were hiding in plain sight. When a pump that trips weekly finally shows up as roughly thirteen failures per quarter instead of one “ongoing issue,” the business case for replacing it writes itself.

Getting Root Cause Right

Fixing symptoms resets the clock. Fixing causes extends it. That’s the fundamental difference between a number that looks good on a dashboard and one that reflects genuine reliability engineering progress.

Most plants conduct some form of root cause failure analysis on major events. The problem is threshold bias: analysis is triggered only for failures that cost more than $50,000 or cause more than 8 hours of downtime. The expensive failures get attention. The cheap, repetitive failures accumulate unchecked.

A facility running 200 assets might see 3 catastrophic failures per year but 400 minor ones. Those minor failures, each costing $500 to $2,000 in parts and labor, total $200,000 to $800,000 a year, and they can add up to more total spend and more lost production than the big events. They also train the workforce to accept a baseline level of dysfunction as normal.

Applying structured root cause methods to the top 10 repeat offenders (by frequency, not severity) typically yields the fastest gains in mean time between failures. These are problems your team already knows how to find. They just haven’t been authorized to fix them permanently.
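
A frequency-based ranking like this can be pulled from work order history with very little code. The following is a rough sketch that counts corrective work orders per asset and failure mode and ignores cost entirely. The record layout (`asset_id`, `failure_mode`, `type`, `cost`) and the sample data are assumptions for illustration; a real CMMS export will use different field names.

```python
from collections import Counter


def top_repeat_offenders(work_orders, n=10):
    """Rank (asset, failure mode) pairs by corrective work order count.

    Only pairs that occurred more than once are returned, since a single
    event is not a repeat failure.
    """
    counts = Counter(
        (wo["asset_id"], wo["failure_mode"])
        for wo in work_orders
        if wo["type"] == "corrective"
    )
    return [(key, count) for key, count in counts.most_common(n) if count > 1]


# Illustrative records only.
work_orders = [
    {"asset_id": "P-101", "failure_mode": "seal leak", "type": "corrective", "cost": 800},
    {"asset_id": "C-310", "failure_mode": "gearbox failure", "type": "corrective", "cost": 60000},
    {"asset_id": "P-101", "failure_mode": "seal leak", "type": "corrective", "cost": 650},
    {"asset_id": "M-204", "failure_mode": "overload trip", "type": "corrective", "cost": 500},
    {"asset_id": "P-101", "failure_mode": "lubrication", "type": "preventive", "cost": 120},
    {"asset_id": "M-204", "failure_mode": "overload trip", "type": "corrective", "cost": 500},
    {"asset_id": "P-101", "failure_mode": "seal leak", "type": "corrective", "cost": 900},
]

for (asset, mode), count in top_repeat_offenders(work_orders):
    print(f"{asset}  {mode}: {count} corrective work orders")
```

Note that the single $60,000 gearbox event does not appear in the result. A severity threshold would have picked it and nothing else, while the frequency ranking surfaces the seal and the motor that keep coming back.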

Building the Data Foundation for Better Mean Time Between Failures

Reliable MTBF calculations require clean data, which means consistent work order practices across every shift, every crew, and every technician. Three things matter most:

- Accurate timestamps: when the failure was reported, when work began, and when the asset returned to service. Rounding to the nearest shift or logging everything at 7:00 AM weakens the precision your calculations need.

- Correct failure coding: using the CMMS failure hierarchy consistently, with codes specific enough to distinguish between a bearing failure, a lubrication failure, and an installation error that damaged a bearing.

- Complete capture: every event logged, including the quick fixes that “didn’t seem worth a work order.” Especially those.
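
The first two items can be partly audited automatically. The sketch below flags work orders with missing or out-of-order timestamps, report times that land exactly on a shift start, and failure codes that are missing or too generic. The field names, the 7:00 AM shift start, and the list of catch-all codes are assumptions chosen for illustration.

```python
from datetime import datetime, time

SHIFT_START = time(7, 0)                      # assumed shift-change time
GENERIC_CODES = {"OTHER", "MISC", "UNKNOWN"}  # assumed catch-all codes


def audit_work_order(wo: dict) -> list[str]:
    """Return a list of data-quality issues found in one work order."""
    issues = []
    reported = wo.get("reported")
    started = wo.get("work_started")
    restored = wo.get("returned_to_service")

    if any(t is None for t in (reported, started, restored)):
        issues.append("missing timestamp")
    elif not (reported <= started <= restored):
        issues.append("timestamps out of order")

    # A report time exactly on the shift boundary is a hint, not proof.
    if reported is not None and reported.time() == SHIFT_START:
        issues.append("reported exactly at shift start, possibly rounded")

    code = wo.get("failure_code")
    if not code:
        issues.append("missing failure code")
    elif code.upper() in GENERIC_CODES:
        issues.append("failure code too generic")
    return issues


clean = {
    "reported": datetime(2024, 3, 4, 14, 12),
    "work_started": datetime(2024, 3, 4, 14, 40),
    "returned_to_service": datetime(2024, 3, 4, 17, 5),
    "failure_code": "BRG-LUBE",
}
suspect = {
    "reported": datetime(2024, 3, 5, 7, 0),
    "work_started": datetime(2024, 3, 5, 7, 0),
    "returned_to_service": None,
    "failure_code": "other",
}
print(audit_work_order(clean))
print(audit_work_order(suspect))
```

Complete capture is the one item a script like this cannot check, because an event that never became a work order leaves nothing to audit. That gap usually has to be closed by comparing work orders against operator logs or control system alarms.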

This discipline takes time to build. Expect 6 to 12 months of coaching, auditing work orders, and feeding results back to technicians before the data reaches a quality level that supports meaningful analysis.

Clean data costs effort up front. Dirty data costs decisions forever.

Once the data is solid, analysis becomes actionable. You can stratify by asset class, by failure mode, by operating context. You can spot the difference between a bearing that fails every 14 months under normal load and one that fails every 6 months because the adjacent process changed and nobody updated the maintenance strategy.
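
As a sketch of what that stratification might look like, the function below groups failure dates by asset and failure mode and computes the mean number of days between consecutive failures. It uses calendar days as a stand-in for operating time, which is a simplifying assumption; the asset IDs and dates are invented for illustration.

```python
from collections import defaultdict
from datetime import date
from statistics import mean


def mean_interval_days(failures):
    """Mean days between consecutive failures per (asset, failure mode).

    `failures` is an iterable of (asset_id, failure_mode, failure_date).
    Groups with fewer than two failures have no interval and are omitted.
    """
    by_key = defaultdict(list)
    for asset_id, mode, day in failures:
        by_key[(asset_id, mode)].append(day)

    result = {}
    for key, days in by_key.items():
        days.sort()
        gaps = [(later - earlier).days for earlier, later in zip(days, days[1:])]
        if gaps:
            result[key] = mean(gaps)
    return result


# Illustrative history: the same failure mode on two similar assets.
failures = [
    ("F-1", "bearing", date(2021, 1, 1)),
    ("F-1", "bearing", date(2022, 3, 1)),
    ("F-1", "bearing", date(2023, 5, 1)),
    ("F-2", "bearing", date(2022, 1, 1)),
    ("F-2", "bearing", date(2022, 7, 1)),
    ("F-2", "bearing", date(2023, 1, 1)),
]

for (asset, mode), days in mean_interval_days(failures).items():
    print(f"{asset} {mode}: {days:.0f} days between failures on average")
```

A site-wide average would blend these two assets into a single figure. Keeping them separate is what reveals that one of them is failing more than twice as often and deserves a closer look at its operating context.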

Pairing MTBF With Supporting Metrics

Mean time between failures in isolation is a blunt instrument. Pairing it with a few complementary metrics gives a much clearer picture of equipment health and maintenance effectiveness:

- MTTR (mean time to repair): if your failure interval improves but MTTR also climbs, your equipment may be failing differently, perhaps in more complex ways that take longer to diagnose. That’s worth investigating.

- Failure recurrence rate: the percentage of assets that experience the same failure mode within 12 months of a repair. A declining recurrence rate confirms that root cause fixes are holding.

- PM-to-CM ratio: the split between planned/preventive work orders and corrective (reactive) ones. As mean time between failures genuinely improves, the site should generally see less emergency corrective work and more planned work, though the ratio still needs operating context.
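
The three metrics above are simple to compute once the underlying records are clean. The following is a minimal sketch under stated assumptions: repair durations are in hours, repairs are `(asset_id, failure_mode, date)` tuples, and "within 12 months" is approximated as 365 days. The function names and sample data are hypothetical.

```python
from collections import defaultdict
from datetime import date


def mttr_hours(repair_durations_hours):
    """Mean time to repair: average of individual repair durations."""
    return sum(repair_durations_hours) / len(repair_durations_hours)


def recurrence_rate_pct(repairs, window_days=365):
    """Percent of repaired assets that saw the same failure mode again
    within `window_days` of an earlier repair."""
    by_key = defaultdict(list)
    assets = set()
    for asset_id, mode, day in repairs:
        by_key[(asset_id, mode)].append(day)
        assets.add(asset_id)
    if not assets:
        return 0.0

    recurring = set()
    for (asset_id, _mode), days in by_key.items():
        days.sort()
        if any((b - a).days <= window_days for a, b in zip(days, days[1:])):
            recurring.add(asset_id)
    return 100.0 * len(recurring) / len(assets)


def pm_to_cm_ratio(pm_count, cm_count):
    """Planned/preventive work orders per corrective work order."""
    return pm_count / cm_count


# Illustrative data only.
repairs = [
    ("P-101", "seal leak", date(2023, 1, 10)),
    ("P-101", "seal leak", date(2023, 6, 1)),      # same mode, inside window
    ("M-204", "overload trip", date(2023, 2, 1)),
    ("M-204", "bearing", date(2023, 9, 1)),        # different mode
    ("C-310", "gearbox", date(2022, 1, 1)),
    ("C-310", "gearbox", date(2023, 6, 1)),        # same mode, outside window
    ("F-400", "belt", date(2023, 4, 1)),
]

print(f"MTTR: {mttr_hours([2.0, 4.0, 6.0]):.1f} h")
print(f"Recurrence rate: {recurrence_rate_pct(repairs):.1f}%")
print(f"PM-to-CM ratio: {pm_to_cm_ratio(6, 4):.2f}")
```

Reporting these alongside MTBF for the same asset group and the same period makes it harder for any single number to improve without the others moving in a consistent direction.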

Tracking early failure detection effectiveness alongside these metrics also helps. If your condition monitoring program catches a meaningful share of developing failures before they cause functional loss, that’s a leading indicator that your failure intervals may continue improving.

What Sustainable Improvement Looks Like

Real gains in mean time between failures are slow. They compound over years, not quarters. A facility that raises its average from 90 days to 120 days across comparable critical assets in 18 months is likely making meaningful progress. One that claims a jump from 90 to 200 days in a single quarter probably changed its counting rules.

The organizations that sustain genuine improvement share a few habits. They review failure data monthly with cross-functional teams (maintenance, operations, engineering). They fund root cause fixes through the capital budget instead of treating everything as a maintenance expense. They resist the urge to set MTBF targets that incentivize creative accounting.

Most importantly, they treat the metric as a diagnostic tool rather than a scorecard. The number reveals where attention is needed. It measures whether past interventions worked. It highlights assets that are aging out of their useful service window. It does all of these things well, as long as nobody is standing behind it with a thumb on the scale.

Getting honest about your data, your failure definitions, and your root cause follow-through is the path to improvement that actually lasts. The alternative (a better-looking spreadsheet with the same worn-out equipment behind it) eventually catches up with everyone.