Artificial intelligence (AI) and Industrial Internet of Things (IIoT) technologies have been marketed as transformative tools for predictive maintenance (PdM). However, independent studies and field data reveal that a majority of these implementations underperform due to structural, organizational, and data-quality issues rather than algorithmic limitations.

This article provides a grounded analysis of why such projects fail, identifies technical and organizational weaknesses, and outlines pragmatic recommendations for achieving measurable reliability improvements through disciplined system design, data governance, and human-centric AI integration.

Rethinking Predictive Maintenance in the Age of AI and IIoT

The proliferation of AI- and IoT-based maintenance systems has created an expectation of autonomous, self-learning reliability platforms capable of detecting faults before they occur. In practice, these systems often fail to deliver their promised value.

The promise of predictive maintenance is limited not by algorithms but by the readiness of the systems and people behind them.

Surveys by PwC (2018) and McKinsey (2022) estimate that 60–80% of industrial PdM programs underperform or are discontinued within two years. The underlying cause is not inadequate algorithms but rather poor infrastructure, inconsistent data, and a lack of operational readiness.

This article aims to separate realistic capability from marketing hype and to provide guidelines for effective adoption of AI and IIoT technologies within industrial reliability programs.

Common Weaknesses in IIoT and AI Deployments

Data Integrity and Integration Failures

The success of AI models depends on data accuracy, consistency, and synchronization. Many IIoT deployments rely on sensors that are unsynchronized, uncalibrated, or improperly timestamped. Clock offsets between channels show up as apparent phase drift and spurious relationships between signals, so models trained on this data cannot reliably distinguish normal from faulty states.

Recommendation: Establish data governance protocols before AI deployment. Synchronize timebases, standardize sampling rates, and define validation thresholds for each sensor stream.
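As an illustration of what such a protocol might look like in practice, the sketch below resamples one sensor stream onto a shared uniform timebase and then applies two simple validation checks. The function names, the gap limit, and the range and missing-data thresholds are all hypothetical choices made for this example; a real deployment would take them from sensor datasheets and site standards.

```python
from bisect import bisect_left


def resample_to_grid(samples, start, stop, step, max_gap):
    """Linearly interpolate (timestamp, value) samples onto a uniform grid.

    A grid point is returned as None when it lies outside the recorded span
    or falls between two real samples that are more than max_gap apart.
    """
    samples = sorted(samples)
    times = [t for t, _ in samples]
    grid = []
    n_steps = int(round((stop - start) / step))
    for k in range(n_steps + 1):
        t = start + k * step
        i = bisect_left(times, t)
        if i < len(times) and times[i] == t:
            grid.append((t, samples[i][1]))  # exact hit, no interpolation
            continue
        if i == 0 or i == len(times):
            grid.append((t, None))  # before first or after last sample
            continue
        (t0, v0), (t1, v1) = samples[i - 1], samples[i]
        if t1 - t0 > max_gap:
            grid.append((t, None))  # gap too wide to interpolate honestly
            continue
        frac = (t - t0) / (t1 - t0)
        grid.append((t, v0 + frac * (v1 - v0)))
    return grid


def validate(grid, low, high, max_missing_fraction):
    """Return (ok, reasons) for a resampled stream (grid must be non-empty)."""
    values = [v for _, v in grid]
    missing = sum(v is None for v in values)
    reasons = []
    if missing / len(values) > max_missing_fraction:
        reasons.append("too many gaps")
    if any(v is not None and not (low <= v <= high) for v in values):
        reasons.append("value out of physical range")
    return (not reasons, reasons)
```

Running every stream through the same grid parameters gives all channels identical timestamps, and streams that fail validation can be excluded from training rather than silently interpolated.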

Lack of Ground Truth for Supervised Learning

Supervised fault classification requires extensive labeled data representing known fault modes. Most industrial environments lack this data because failures are rare and inconsistently documented. Consequently, many AI PdM systems cannot differentiate between minor deviations and genuine faults.

Recommendation: Implement unsupervised anomaly detection as a first phase to define ‘normal’ behavior. Transition to supervised classification only after sufficient fault events are verified by subject matter experts.
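One simple way to define "normal" without any fault labels is a robust statistical baseline. The sketch below is a minimal example of that idea, not a recommendation of a specific algorithm: it fits the median and median absolute deviation (MAD) of a feature recorded during known-healthy operation and scores new readings by their distance from that baseline. The function names and the 3.5 threshold are assumptions for illustration.

```python
from statistics import median


def fit_baseline(healthy_values):
    """Summarize healthy operation by its median and MAD."""
    med = median(healthy_values)
    mad = median([abs(v - med) for v in healthy_values])
    return med, mad


def anomaly_score(value, baseline):
    """Distance from the healthy median in robust standard-deviation units."""
    med, mad = baseline
    # 1.4826 scales the MAD so it is comparable to a standard deviation
    # when the healthy data is roughly Gaussian.
    scale = 1.4826 * mad
    if scale == 0:
        return 0.0 if value == med else float("inf")
    return abs(value - med) / scale


def flag(values, baseline, threshold=3.5):
    """Mark readings whose score exceeds the (assumed) threshold."""
    return [anomaly_score(v, baseline) > threshold for v in values]
```

Flagged events reviewed and confirmed by subject matter experts can then accumulate into the labeled set needed for a later supervised phase.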

False Positives and Alarm Fatigue

Excessive false alarms are a leading cause of PdM program failure. A model tuned for high recall at the expense of precision flags many healthy machines, which creates alarm fatigue and erodes operator trust. Conversely, overly conservative thresholds miss early-stage defects (false negatives).

Recommendation: Apply cost-sensitive learning to balance detection sensitivity with operational cost. Include human feedback loops to recalibrate thresholds and reduce spurious alarms over time.
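A lightweight form of cost-sensitive tuning is to choose the alarm threshold that minimizes total cost on events technicians have already reviewed. The sketch below assumes a model that outputs a numeric score per event, a list of operator-confirmed labels (True for a genuine fault), and per-event costs for a false alarm and a missed fault. All names and cost figures are hypothetical.

```python
def expected_cost(scores, labels, threshold, cost_fp, cost_fn):
    """Total cost of alarming on every event with score >= threshold."""
    fp = sum(1 for s, y in zip(scores, labels) if s >= threshold and not y)
    fn = sum(1 for s, y in zip(scores, labels) if s < threshold and y)
    return fp * cost_fp + fn * cost_fn


def pick_threshold(scores, labels, cost_fp, cost_fn):
    """Return the candidate threshold with the lowest total cost.

    Candidates are the observed scores plus one value above the maximum
    (meaning "never alarm"). Ties go to the lowest, most sensitive threshold.
    """
    candidates = sorted(set(scores)) + [max(scores) + 1.0]
    return min(
        candidates,
        key=lambda t: expected_cost(scores, labels, t, cost_fp, cost_fn),
    )
```

Re-running `pick_threshold` as technicians confirm or dismiss alerts is one way to close the feedback loop: when a missed fault is costed much higher than a false alarm the threshold drops, and when nuisance alarms dominate the cost it rises.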

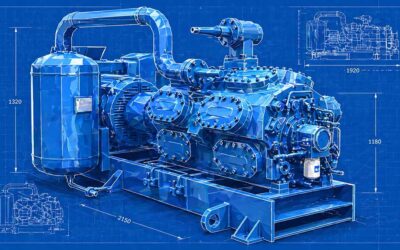

Infrastructure and Sensor Limitations

Wireless vibration and current sensors simplify installation but often sample at low rates. Spectral content above half the sampling rate cannot be recovered, so incipient fault indicators such as sideband harmonics are missed. Data gaps and signal compression further reduce analytical resolution, leading to late-stage fault detection.
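Whether a given sensor can capture a particular sideband pattern is a quick arithmetic check. The sketch below assumes sidebands spaced at a fixed interval around a carrier frequency (for example, shaft-speed sidebands around a gear-mesh frequency) and compares the highest sideband of interest against the Nyquist limit. The function names, the default of three sidebands, and the 2.56 margin (a convention used by many vibration analyzers) are assumptions for illustration.

```python
def highest_frequency_hz(carrier_hz, sideband_spacing_hz, n_sidebands=3):
    """Highest upper sideband of interest around the carrier."""
    return carrier_hz + n_sidebands * sideband_spacing_hz


def can_resolve(sensor_rate_hz, carrier_hz, sideband_spacing_hz, n_sidebands=3):
    """True if the sensor's sampling rate exceeds twice the highest sideband."""
    f_max = highest_frequency_hz(carrier_hz, sideband_spacing_hz, n_sidebands)
    return sensor_rate_hz > 2.0 * f_max


def suggested_rate_hz(carrier_hz, sideband_spacing_hz, n_sidebands=3, margin=2.56):
    """Sampling rate with headroom above the bare Nyquist minimum."""
    f_max = highest_frequency_hz(carrier_hz, sideband_spacing_hz, n_sidebands)
    return margin * f_max
```

For a 1200 Hz carrier with 25 Hz sideband spacing, the third upper sideband sits at 1275 Hz, so a sensor sampling at 1000 Hz cannot capture it regardless of how sophisticated the downstream model is.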

Recommendation: Use hybrid architectures: wired high-fidelity sensors for critical assets and wireless sensors for non-critical applications. Incorporate edge storage and buffering to mitigate network interruptions.
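Edge buffering can be as simple as a bounded queue that holds samples until the uplink accepts them. The sketch below is a minimal in-memory version; the `EdgeBuffer` class and the `send` callback are hypothetical, and a production device would normally persist the queue to local storage as well.

```python
from collections import deque


class EdgeBuffer:
    """Bounded store-and-forward queue for samples awaiting upload."""

    def __init__(self, capacity):
        self._pending = deque(maxlen=capacity)
        self.dropped = 0  # samples overwritten because the buffer was full

    def record(self, sample):
        if len(self._pending) == self._pending.maxlen:
            self.dropped += 1  # the oldest sample is about to be discarded
        self._pending.append(sample)

    def flush(self, send):
        """Send pending samples oldest-first; stop at the first failure.

        `send` is a caller-supplied function returning True on success.
        Returns the number of samples delivered in this call.
        """
        sent = 0
        while self._pending:
            if not send(self._pending[0]):
                break  # keep the sample and retry on the next flush
            self._pending.popleft()
            sent += 1
        return sent
```

Tracking `dropped` matters as much as the buffering itself: it tells the analytics side exactly how much data was lost during an outage instead of leaving an unexplained gap.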

Organizational Readiness and Cultural Resistance

The greatest obstacle to successful AI adoption is often human, not technical. PdM initiatives frequently fail when technicians and engineers are not involved in implementation. A lack of ownership, combined with early false alarms, results in rapid program abandonment.

Recommendation: Develop multidisciplinary teams involving reliability, operations, and IT staff. Provide ongoing training on interpreting AI outputs and integrating results into maintenance workflows.

Realistic Expectations

Explainable AI (XAI) and Human-Centered Reliability

Black-box AI models are rarely trusted by maintenance teams. Explainable AI (XAI) provides transparency by identifying which features – such as frequency peaks or current harmonics – contributed most to a fault classification.

Tools such as SHAP, LIME, and Grad-CAM allow operators to link AI decisions to known physical indicators, bridging the gap between data science and engineering understanding.

Recommendation: Integrate XAI dashboards directly into PdM platforms, allowing technicians to visualize which signals drive each alert. This increases both trust and interpretability.
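To show the kind of output such a dashboard would surface, the sketch below computes per-feature contributions for a deliberately simple linear scoring model. It does not use the SHAP or LIME libraries; it is a simplified stand-in in which each feature's contribution is its weight times its deviation from a healthy baseline, which is also what SHAP-style attribution reduces to for a linear model with independent features. The feature names, weights, and values are invented for illustration.

```python
def feature_contributions(weights, baseline, sample):
    """Contribution of each feature to (score(sample) - score(baseline))."""
    return {
        name: weights[name] * (sample[name] - baseline[name])
        for name in weights
    }


def top_drivers(contributions, k=3):
    """The k features with the largest absolute contribution, largest first."""
    ranked = sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
    return ranked[:k]


# Hypothetical model and readings, for illustration only.
weights = {"bpfo_peak": 2.0, "rms_velocity": 0.5, "current_5th_harmonic": 1.0}
baseline = {"bpfo_peak": 0.1, "rms_velocity": 1.0, "current_5th_harmonic": 0.2}
sample = {"bpfo_peak": 0.6, "rms_velocity": 1.2, "current_5th_harmonic": 0.2}

drivers = top_drivers(feature_contributions(weights, baseline, sample), k=2)
```

Here the outer-race bearing peak dominates the alert, which a technician can verify directly against the spectrum rather than taking the classification on faith.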

Recommendations for Sustainable Implementation

- Data Hygiene First: Ensure accurate timestamps, calibration, and metadata consistency.

- Pilot Programs: Begin with one machine class to validate data integrity and model response.

- Hybrid Modeling: Combine physics-based signal analysis with AI pattern recognition.

- Operator Feedback: Treat technicians as co-trainers for the AI system.

- Continuous Model Maintenance: Revalidate models periodically against updated machine conditions.
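The last item, periodic revalidation, can be triggered by a simple drift check rather than a fixed calendar. The sketch below compares the recent mean of a model input feature with the reference window used at training time, measured in reference standard deviations. The function names and the one-standard-deviation limit are assumptions; a plant would tune the limit per asset class.

```python
from statistics import mean, stdev


def drift_score(reference, recent):
    """Shift of the recent mean, in units of the reference standard deviation.

    `reference` needs at least two values; `recent` needs at least one.
    """
    ref_sd = stdev(reference)
    shift = abs(mean(recent) - mean(reference))
    if ref_sd == 0:
        return 0.0 if shift == 0 else float("inf")
    return shift / ref_sd


def needs_revalidation(reference, recent, max_shift=1.0):
    """True when the feature has moved further than the (assumed) limit."""
    return drift_score(reference, recent) > max_shift
```

A sustained shift after an overhaul, a process change, or a sensor replacement is a signal that the model's notion of normal no longer matches the machine, even if no alarm has fired.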

Redefining Success in Predictive Maintenance Through Reliability Discipline

AI and IIoT technologies have significant potential when grounded in strong reliability engineering practices. Success depends less on algorithmic sophistication and more on data discipline, human collaboration, and realistic expectations.

Predictive maintenance should be viewed not as an autonomous solution but as an evolutionary process – one that augments, rather than replaces, the expertise of the maintenance professional.