Maintenance maturity assessment mistakes cost organizations more than the assessments themselves. A team spends weeks gathering data, scoring categories, and building a picture of where their maintenance program stands.

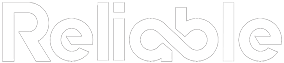

Then someone produces a side spreadsheet, the scores get adjusted, and the whole exercise loses its value. The assessment still gets filed, but the results no longer reflect reality.

This pattern shows up more often than anyone likes to admit. And it’s worth understanding why it happens, because the fix requires more than a better scoring template.

Why Maintenance Maturity Assessment Mistakes Keep Happening

Maturity models work by scoring an organization’s practices against a defined scale, usually from reactive (level 1) through optimized (level 4 or 5). The intent is to produce an honest baseline so improvement efforts land in the right areas.

The problem starts when the assessment becomes a performance evaluation instead of a diagnostic tool. If managers believe their score will be compared to other sites, reported to corporate, or used in budget decisions, the incentive flips. Accuracy becomes less important than optics.

That’s when the shadow spreadsheets appear. Someone recalculates the weighted averages. Someone else challenges the scoring criteria. A category that came in at level 2 gets bumped to level 3 because “we just started that initiative last quarter.” The final score looks better. The underlying problems remain untouched.

Self-scoring introduces a second layer of bias. Teams consistently overestimate their own maturity, particularly in areas where they’ve invested resources but haven’t yet seen results. They confuse activity with achievement.

Having a program or a system in place is not the same as getting results from it. A vibration program only delivers value when analysts are trained and routes are optimized. A CMMS only produces reliable data when technicians enter accurate, complete work orders.

The Spreadsheet Problem

Side spreadsheets are the clearest symptom of an assessment gone wrong. When someone feels the need to create a parallel version of the scoring, it usually means one of three things:

- The official scoring criteria are too vague, leaving room for interpretation and argument

- The results threaten someone’s credibility, budget, or narrative about the site’s performance

- The assessment is being used for comparison or ranking rather than improvement

Each of these root causes requires a different response, but all three share a common thread: the assessment process didn’t have enough structural safeguards to protect the integrity of the results.

Effective maturity scoring follows the same discipline as a good root cause failure analysis. You follow the evidence. You document your reasoning. You let the data tell you where you stand, even when the answer is uncomfortable.

Fixing the Process Before Running the Assessment

The most common maintenance maturity assessment mistakes are process failures. The scoring model might be perfectly sound, but if the wrong people control the inputs, the outputs will be unreliable.

Start by separating the assessment from any performance review or site ranking. Make it explicit: the maturity score is a planning tool designed to guide investment decisions. This single change reduces the incentive to game the results.

- Use third-party or cross-functional assessors instead of self-scoring wherever possible

- Require documented evidence for any score above level 2 (procedures, data trends, completion records)

- Lock the scoring criteria before the assessment begins so they can’t be adjusted after the fact

- Publish the methodology alongside the results so anyone reviewing them understands how the scores were derived

These safeguards don’t eliminate bias entirely, but they make it much harder to inflate scores without pushback.
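Two of these safeguards, the evidence requirement and the locked criteria, are simple enough to check mechanically. The following is a minimal sketch, not a prescribed tool: the `CategoryScore` structure, the function names, and the sample criteria and scores are all hypothetical, and the threshold of 2 is taken from the evidence rule in the list above.

```python
import hashlib
import json
from dataclasses import dataclass, field

EVIDENCE_THRESHOLD = 2  # scores above this level must cite evidence


@dataclass
class CategoryScore:
    category: str
    level: int
    evidence: list = field(default_factory=list)  # e.g. procedure IDs, trend reports


def criteria_fingerprint(criteria: dict) -> str:
    """Hash the scoring criteria so later edits to them are detectable."""
    canonical = json.dumps(criteria, sort_keys=True)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def unsupported_scores(scores: list) -> list:
    """Return categories scored above the threshold with no evidence attached."""
    return [s.category for s in scores
            if s.level > EVIDENCE_THRESHOLD and not s.evidence]


# Hypothetical criteria and scores, for illustration only.
criteria = {"level_2": "work is planned but often rescheduled",
            "level_3": "schedule compliance is tracked and reviewed weekly"}
locked = criteria_fingerprint(criteria)  # record this before the assessment starts

scores = [
    CategoryScore("planning and scheduling", 2),
    CategoryScore("work order quality", 3, ["work order completion records"]),
    CategoryScore("predictive maintenance", 3),  # no evidence attached
]

print(unsupported_scores(scores))               # ['predictive maintenance']
print(criteria_fingerprint(criteria) == locked)  # True while criteria are unchanged
```

Any category the first check returns either gets evidence attached or drops back to level 2. If the fingerprint recorded before the assessment no longer matches afterward, the criteria were edited along the way.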

Another common maturity assessment mistake is treating the exercise as a one-time event. A single snapshot tells you where you are today, but it reveals nothing about trajectory. Without a follow-up assessment using the same methodology, there is no way to know whether improvement initiatives actually moved the needle.

Frequency matters, too. Assessments run less often than once a year lose their ability to create accountability. Teams forget the baseline, priorities shift, and the connection between the assessment results and the improvement plan dissolves. Annual assessments with consistent methodology create a feedback loop that keeps the improvement plan grounded in evidence.
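Comparing two assessments category by category is one way to make that feedback loop concrete. The sketch below assumes both assessments scored the same categories on the same scale. The function name and the sample scores are hypothetical.

```python
def score_changes(baseline: dict, follow_up: dict) -> dict:
    """Per-category change between two assessments with identical categories."""
    if baseline.keys() != follow_up.keys():
        raise ValueError("assessments must score the same categories to be comparable")
    return {cat: round(follow_up[cat] - baseline[cat], 2) for cat in baseline}


# Hypothetical scores from two consecutive annual assessments.
baseline = {"planning": 2.0, "scheduling": 2.0, "storeroom": 1.5}
follow_up = {"planning": 3.0, "scheduling": 2.0, "storeroom": 2.0}

changes = score_changes(baseline, follow_up)
stalled = [cat for cat, delta in changes.items() if delta <= 0]

print(changes)  # {'planning': 1.0, 'scheduling': 0.0, 'storeroom': 0.5}
print(stalled)  # ['scheduling']
```

Rejecting mismatched category sets is deliberate: if the methodology changed between runs, the difference in scores says nothing reliable about progress.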

Documentation is the other missing piece. Every score should have a written justification that references specific evidence: work order completion rates, schedule compliance data, procedure audit results, or training records. When scores require evidence, the shadow spreadsheet problem largely disappears because there is no room for subjective inflation.

Getting Value from an Honest Assessment

An accurate maturity assessment, even one that produces low scores, is worth far more than a polished one that hides the gaps. Low scores in specific categories tell you exactly where to invest next. A score of 2 out of 5 on planning and scheduling, for example, means the team should focus on building that foundation before chasing advanced analytics or condition monitoring technology.

Organizations that act on honest assessment results tend to see faster progress because their maintenance planning targets the right gaps. A site that knows it’s at level 2 on scheduling discipline will invest in planner training and schedule compliance tracking. A site that inflated that score to level 3 will skip those fundamentals and wonder why the next assessment hasn’t moved.

The same principle applies to technical capabilities. If your predictive maintenance strategy scored poorly, that’s a clear signal to evaluate training, staffing, and technology gaps before expanding the program.

Watch for another subtle maintenance maturity assessment mistake: averaging category scores into a single number. A composite score of 3.2 hides the fact that planning might be at a 4 while storeroom management sits at a 1.5.

The average looks respectable, but the weak link still breaks the chain. Report scores by category and resist the temptation to roll them up into a single figure that obscures the details.
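A few lines of arithmetic show how this happens. In the sketch below, the planning and storeroom scores come from the example above. The other three categories and their scores are hypothetical, chosen so that the composite works out to 3.2.

```python
from statistics import mean

scores = {
    "planning": 4.0,                # from the example above
    "storeroom management": 1.5,    # from the example above
    "scheduling": 3.5,              # hypothetical
    "work order quality": 4.0,      # hypothetical
    "predictive maintenance": 3.0,  # hypothetical
}

composite = round(mean(scores.values()), 1)
weakest = min(scores, key=scores.get)

print(composite)                 # 3.2
print(weakest, scores[weakest])  # storeroom management 1.5
```

The composite alone would place this site comfortably at level 3. Reporting the lowest-scoring category next to it, or the full table in its place, keeps the storeroom gap visible.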

Run the assessment annually, using the same methodology and the same safeguards. Track the trajectory. The value of a maturity model comes from measuring change over time, and that measurement only works if each data point is honest.

The organizations that get maturity assessments right treat them as mirrors. They want to see what’s actually there, because accurate data is the only foundation for a plan that works.